2026

No, the chatbot didn't cure that dog's cancer

It’s Easter Sunday. To celebrate, I took the Windrush line up to Hoxton, had festive Swedish meatballs at the Curious Yellow Kafe, then wandered through Shoreditch under that thin, deceptive spring sun London offered today. On the train back south, the miracle arrived in my idle-time phone scrolling. It happened somewhere between Whitechapel and where the internet cuts out when the train goes underground for a few stops. A tech bro cured his dog’s cancer with ChatGPT. It’s an Easter story, neatly packaged for the feed. Like, share, move on. Don’t miss your stop.

Read the story? Why do that? There was a preview card. A toothy, smiling white man and his happy Staffy mix. The headline completes the Hallmark Channel story for our times: “He used AI to create a cancer vaccine to save his dying dog.” The ChatGPT logo floats just above the dog’s head, like a halo in a Renaissance painting of a saint. The story completes itself without the need of clicking through, or dealing with cookie consent forms, various pop-ups, a paywall, an e-newsletter subscription request, or whatever else nearly every commercial news site throws at you with JavaScript. We are living in miraculous times.

This is how most information moves now. Not through articles, but through surfaces. Preview cards, thumbnails, captions. Carefully assembled fragments designed to survive the scroll. The article itself exists, somewhere beneath, but it’s now almost incidental. By the time you might click, you’ve already decided what happened.

And this one lands because it’s engineered to. Cancer does the heavy lifting. The dog does the rest. It disarms you, makes scepticism feel inappropriate. It’s Easter after all.

But here’s the twist! It’s not just medicine, but AI! The bot we can all access to lazily respond to emails we’d like to ignore is being used by some wunderkind Down Under to cure cancer. We all have access to the thing that cures cancer. Or something like that. Or rather, no. It’s nothing like that.

Read these or don’t, can’t say you didn’t get the chance: To varying degrees, these are all hype headlines. The articles themselves vary in quality and detail. They all contain elements of the mythology that makes them shareable. The UNSW headline is especially egregious. It’s a university, for fuck’s sake. Have some standards.

- He Solved His Dog’s Cancer: Three AI Models Helped — Forbes

- An Australian tech entrepreneur used AI to help create the first-ever bespoke cancer vaccine for a dog to treat his beloved pet Rosie — Fortune

- ChatGPT and AlphaFold Help Design Personalized Vaccine for Dog with Cancer — The Scientist

- Meet the man who designed a cancer vaccine for his dog — UNSW

What actually happened is slower, messier, and much less cinematic. It’s not even a particularly good Netflix series. Multiple rounds of conventional treatment didn’t work. The dog’s owner, with access, privilege and resources, pushed further into the system rather than bypassing it. DNA sequencing, researchers, lab work, a bespoke mRNA construct manufactured by specialists (not bots), layered with another form of immunotherapy. Ethics approvals. The AI is there throughout, but as a tool in the process, helping navigate research and make sense of data, not designing, manufacturing, or delivering treatment. The result isn’t a cure. It’s a partial response. It’s a treatment. Uneven, uncertain, and still unfolding.

That version of the story doesn’t travel. First off, it’s too complicated. Secondly, there’s no tidy hero element. No archetype pulled from the offspring of an Ayn Rand character template and a Robert F. Kennedy health policy. There’s a reason that Elizabeth Holmes conned people for so long. We’re conditioned to believe in unicorns. It slots neatly into something older. The founder myth: The outsider who breaks through where experts failed. The idea that you don’t need institutions, just ingenuity and the right tools. Being a dropout is even better. Not knowing the field is somehow an advantage, not a limitation. We want the dropout to win. It’s a comfortable tale because we’ve seen it before, in different forms, attached to different sectors, technologies, selling different shortcuts. The thing about unicorns, though, is that they aren’t real. It’s a team of special effects people.

“When an Australian tech entrepreneur with no background in biology or medicine said ChatGPT helped save his dog from cancer, the story couldn’t help but spread,” wrote Robert Hart in The Verge. “It’s the kind of validation Big Tech has long craved: proof that AI will revolutionize medicine and take on one of its deadliest diseases. The reality, as usual, is more complicated.”

That Verge article gets it. “Not only was Rosie not cured of cancer, it’s not clear the mRNA vaccine was responsible for her improvement.” But it’s lobbing the truth bombs on the wrong side of a paywall. Misinformation runs free online while facts, context and details often need a monthly credit card payment. But even when an article isn’t paywalled, there’s an increasing tendency to share before reading. A person could take that Verge article URL and knock it into archive.ph and see the whole thing. But who knows that? How many people will do it? How many people will see the article at all compared to the more SEO-tasty clickbait headlines that conform to our mythologies about tech founder genius? The funnel chart narrows pretty fast.

As is the custom on LinkedIn, it became fodder for everyone’s personal TED Talk script in the form of very long posts, often with single-sentence paragraphs. “This sounds like science fiction… but it actually happened,” wrote one person. “This is what can happen when a data scientist refuses to give up on his dog,” gushed another. Sorry folks, not this time.

“ChatGPT did not design or create Rosie’s treatment; human researchers did. At most, the chatbot served as a research assistant helping Conyngham parse medical literature — impressive, but a far cry from the breakthrough implied.”

— Robert Hart, The Verge

This isn’t about AI. It’s about belief. Right now The Discourse is fermenting. AI enthusiasts are banging the drum. Utopia is nigh! AI bashers are pointing out that the hype machine has its new poster critter. It’s not that these technologies aren’t useful in medical research, they demonstrably are: “These technological innovations not only improve vaccine design but also enhance pharmacokinetics and pharmacodynamics, offering promising avenues for personalized cancer immunotherapy.”

Humans don’t do lossless data compression. Information drops. It goes like this… Some event happens, a medical or technical breakthrough of some kind, let’s say. It’s complicated and contingent. Institutions frame it through teams of reviewers, cautiously, but optimistically. Companies try to leverage it for shareholder value. Media compresses it into something clickable to trigger as many monetisation scripts as possible before page exits hit. Social platforms format it into something that propels engagement and reduces departure. And then people take it, reshape it, and pass it on again for whatever reason. At each step, something is lost in a sort of social web non-random natural selection process. Nuance, complexity and uncertainty drop out of the pool early. Collaborative efforts are recessive, hero elements are dominant. What remains is the part that travels. To understand why it works this way, read fewer blog posts on social media engagement strategies and pick up some Joseph Campbell.

This isn’t Cambridge Analytica shenanigans. Those happen but they’re something else. This is default mode transmission: It comes with each transaction. The tools are technical, but behaviour is human. It doesn’t just spread information, it reshapes it into something that can move faster with each share that gets reshared. And in doing so, it often removes the parts needed to understand whether it’s true. It’s not necessarily false, but it’s often not accurate. And it’s optimised for people to be wrong.

“So much of our past has been shaped by this petty proceduralism. You could draw a straight line between an amendment in Brussels and a mass grave in Kazakhstan.” — Molly Crabapple, ‘Here Where We Live is Our Country’

A lot of banging great lines in this book!

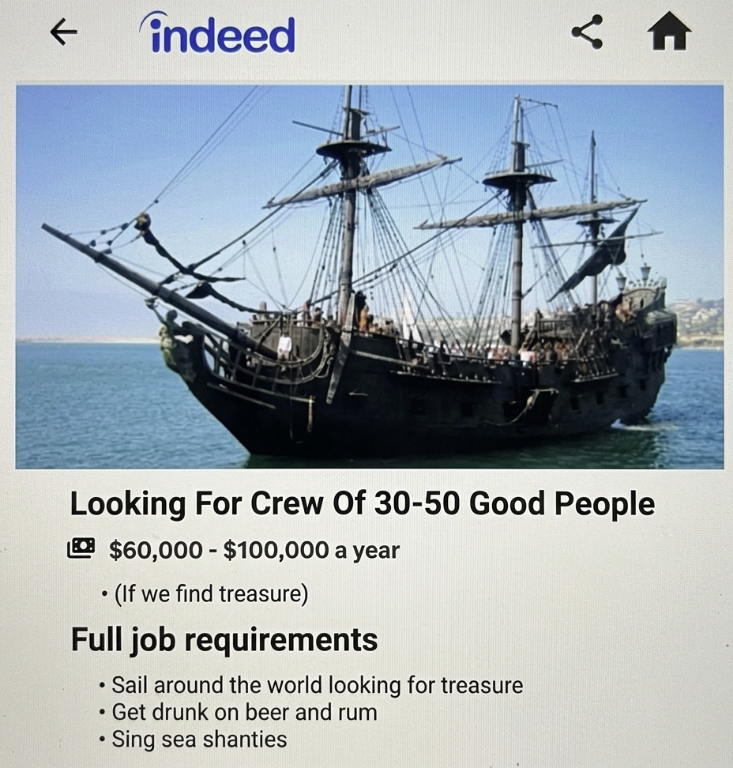

AI can take all the serious jobs and do whatever with them if we can just have these kinds of opportunities in exchange.

Wild Geese, by Mary Oliver

You do not have to be good.

You do not have to walk on your knees

for a hundred miles through the desert repenting.

You only have to let the soft animal of your body

love what it loves.

Tell me about despair, yours, and I will tell you mine.

Meanwhile the world goes on.

Meanwhile the sun and the clear pebbles of the rain

are moving across the landscapes,

over the prairies and the deep trees,

the mountains and the rivers.

Meanwhile the wild geese, high in the clean blue air,

are heading home again.

Whoever you are, no matter how lonely,

the world offers itself to your imagination,

calls to you like the wild geese, harsh and exciting -

over and over announcing your place

in the family of things.

It strikes me that increasingly in the world it is becoming harder – that there are more people who are not really critically aware of the forces that are shaping them. That’s what most people are feeling today – and that’s the goal. That’s what authoritarian regimes do. — Raoul Peck, director of Orwell: 2+2=5

The Analytical Engine has no pretensions whatever to originate any thing. It can do whatever we know how to order it to perform. It [cannot] anticipat[e] any analytical relations or truths. Its province is to assist us in making available what we are already acquainted with. — Ada Lovelace, on AI in 1843

More notes on sovereignty

Consider this post to be the afterbirth of the last one. It’s the bits and pieces I couldn’t cram into the last one, which was already haemorrhaging asides, segues and diversions. As such I’ll not even try hard to connect the dots presented this time.

I thought I was done with the sovereignty posting. There are other things I want to get onto. Other half-baked rants, sketchy musings and dubious epiphanies languish in draft mode. But I blog far slower than the world moves. I still had notes I couldn’t cram into the last post. Still a few tabs open I couldn’t bring myself to exile into the cold, forgotten but persistent realm of my browser history without processing them somewhere. This is somewhere.

This one’s about another kind of digital sovereignty. The personal kind. No, this isn’t about the weird far-right movement that spawned the Nick Offerman movie. Though there are lessons in that which may cross-pollinate here. There’s this notion that people have developed: Jurisdiction curation.

Companies are noticing. It’s becoming marketing hype lexicon. LinkedIn is filthy with tech ads suddenly promising everyone their own private sovereignty in the cloud.

Some FOSS developers are getting in on it.

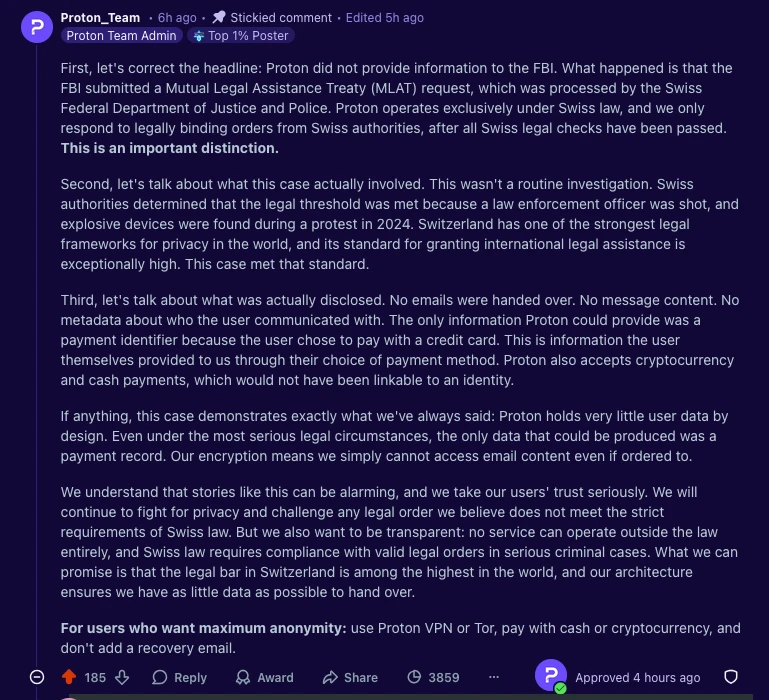

But everything in a cloud is just on someone else’s machine, and machines are owned by people who are subject to laws. Consider Proton. Proton sells Switzerland as part of its privacy promise. Neutrality. Mountains. Banking secrecy. Nazi gold. A jurisdiction imagined as immune from foreign power. People fill in the gaps themselves. Reality fills in the gaps as well. Guess which one wins.

Switzerland participates in the same legal mesh as everyone else. Mutual Legal Assistance Treaties (loads of these). Cross-border warrants. Structured cooperation between prosecutors who don’t care about marketing copy. Five years ago, Proton logged and handed over an IP address tied to a French climate activist after a Swiss order. More recently, payment data tied to an anonymous account was sufficient to unmask a protester in the U.S. for the FBI.

Proton’s defence is correct. Like something a lawyer would write. They minimise retained data, they can’t decrypt message content. None of that contradicts the outcome. They didn’t “help the FBI.” They complied with Swiss law… which helped the FBI. “We only respond to Swiss authorities” is accurate. It’s also doing a second job. Swiss authorities are exactly how foreign requests arrive.

“It is true that Proton is located in Switzerland and responded to a legal request from the Swiss authorities. But it is also true that most people do not know what an MLAT is and there is a widespread misunderstanding that using Proton will protect your account from US govt requests.” — Eva Galperin

Jurisdiction isn’t a magical barrier developed by the Elves around Rivendell. It’s a routing layer. You aren’t choosing whether law applies. You’re choosing which legal process applies first, and under what thresholds it hands off to another one. It’s a friction point. Stick this in your threat model: Navigating tech sovereignty is only going to be harder in this emerging world of backyard hegemonic geopolitics.

Still, we’re not all dodgy billionaires trying to remain afloat in international waters. Sovereignty is the new flavour for us common folk, too. There are plenty of valid reasons to move your stack out of an adversarial jurisdiction. The recent surge in “leave Big Tech” guides makes that clear, from mainstream pieces to a growing number of personal migration logs documenting the process in detail.

- The Guardian — Leave Big Tech behind! How to replace Amazon, Google, X, Meta, Apple – and more

- Coinerella — Made in EU: It was harder than I thought

- Zeitgeist of Bytes — Bye Bye Big Tech: How I Migrated

- Disconnect Blog — Getting off US tech: A guide

These are all good posts for those wanting to migrate their bits. The change they reflect is the impetus. These kinds of guides used to be about switching to open source. Now they’re about geography. The Faustian bargain trade-offs return when you go indie. Missing features. More payment gateways. Interoperability gaps. The quiet reintroduction of complexity that hyperscalers spent decades covering with UI development and SSO paths.

It’s still probably worth it, but what emerges is less of a clean break and more of a negotiated position. It’s partial exits, and hybrid stacks with work-arounds. It looks less like independence and more like jurisdiction curation. If you’re not escaping entirely, at least you’re selecting your dependencies. Maybe that’s enough for the period we’re in. For now. Or maybe not. We’ll find out. These are exciting times.

You can’t unscramble the egg and put it back in its shell. Both national and individual attempts at gaining technological “sovereignty” (or independence, or whatever) run up against the fact that we’ve already made the omelette. Yeah, I’m mixing a lot of metaphors. I’m not un-mixing them. It’s too late. Go with it. Consider torrents, Hollywood, Bollywood, the IIPA, and DNS (you didn’t see that coming).

Christian Dawson, Executive Director of the i2Coalition, recently wrote about anti-piracy enforcement rulings in the Delhi High Court that go beyond holding websites violating copyright laws accountable, or instructing Indian ISPs to block them, and are now green-lighting legal action against domain registrars. The International Intellectual Property Alliance (IIPA) praised the court, Dawson noted, claiming hundreds of piracy sites have already been wiped from the internet as a result. This enthusiasm was shared by many other U.S. rights holders, who have welcomed India’s approach as a model for tackling piracy globally.

“If you stop there, India’s approach sounds like a win because the temptation is to measure success in takedown numbers,” wrote Dawson. “But enforcement architecture matters more than weekly disruption statistics. If the structure being built today erodes jurisdictional limits, proportionality, and procedural safeguards, rights holders may find that the same tools celebrated in one case become destabilizing in many others.”

“Are we prepared for a world in which a single national court can, under its own standards of proportionality, functionally shape global DNS operations through orders directed at infrastructure providers, including foreign companies? Because once that door is open, it does not stay limited to piracy. It never does.” — Christian Dawson

Where’s your sovereignty sit within any of that?

The urge to flee is growing more common. Migrants want to be where it’s safer. Don’t we all? What are they fleeing in this digitised context? No one before the start of 2025 talked about internet sovereignty outside of niche academic circles or the deepest pits of policy wonkery. Now it’s the stuff of blogs and launches hundreds of LinkedIn hot takes on a daily basis. What changed? America did.

Under the Trump administration the DOJ is ignoring the Privacy Protection Act to go after journalists it isn’t happy with. Congress is working across the aisle to abolish anonymous speech on the internet and speed through legal mechanisms that would open the floodgates for unprecedented levels of mass surveillance and censorship.

And then there’s just stuff like this I can’t quite categorise, in which people are now looking at how to nip in the bud the worst possible elements of the forthcoming nightmare existence they see coming around the bend…

The [Washington] state Senate unanimously passed legislation Wednesday that prohibits employers from requesting, requiring or encouraging employees to have microchips implanted in their bodies. The bill previously passed the House of Representatives on an 87-6 vote last month. It now heads to the governor’s desk for final approval. — The Seattle Times

This is all about fleeing the U.S. Even if you’re not in it, your bits probably are, at least to some degree. “Day by day, the United States is becoming more overt in using its economic and technological influence against its adversaries,” writes Reem Almasri, “which makes the question of independence from dominant US technology companies increasingly urgent.”

“The United States has become the world’s biggest bully, threatening any country that doesn’t do as it demands with tariffs, and its tech companies are taking full advantage by flexing their muscle and trying to avoid effective regulation around the world. The drawbacks of our dependence on US tech companies have become more obvious with every passing year, but now there can hardly be any denying that where we can pry ourselves away from them, we should make the effort to do so.” — Paris Marx

There’s good reason for all this distrust. But the problem is thornier than it might appear. American infrastructure is deeply entangled in the services we use every day. It isn’t always transparent about where it sits. The Dutch government commissioned a legal review of Amazon’s “European Sovereign Cloud” (marketed as sovereignty-friendly) only to quietly pull the report after experts pointed out all the gaping holes and risks. It doesn’t matter where the data centre sits or who staffs it. If the provider is an American company then the U.S. has a long arm of the law to compel access or suspend services entirely.

“The technology is delivered as a black box. To ensure the security of government data, you want to check at the source code level for backdoors.” — Nitesh Bharosa, Professor of Government Technology, Delft University of Technology

The opacity is the problem. Even a government department actively trying to assess the risks couldn’t see inside the product it was evaluating. For individuals, the challenge is even greater. American (and other) cloud infrastructure hides inside apps, services, and platforms in ways that are rarely disclosed and almost impossible to audit.

Writing in Sovereignty for Sale, Sam Freedman argues that this dependency is no longer just a commercial inconvenience. A case in point: Elon Musk’s decision to restrict Ukraine’s use of Starlink terminals showed how control over foreign-owned technology can alter the course of a war. For anyone — individual or nation — relying on infrastructure they don’t control and can’t inspect, the risk is real, even when it’s invisible.

My point — and I do have one — is that we need another way. Signal is a U.S.-based platform, but I trust it, for good reasons. Telegram has a global network of servers and is based in Dubai. For good reasons no one should trust it as a secure messenger. I see your jurisdiction, and raise you a security posture, protocol selection or technology architecture that renders it moot.

One size never fits all. This isn’t off-the-rack on the high street. That’s what you were escaping, remember? For file storage, encrypt locally before anything touches a cloud. Your keys, your ciphertext, nobody else’s problem to solve. For email, client-side end-to-end encryption means mathematically unreadable, not “we promise we can’t read it” unreadable. For social, federated protocols like ActivityPub mean your identity isn’t owned by a single company in a single jurisdiction that can be leaned on. For web traffic, encrypted DNS stops queries being snooped at the network level, where a lot of quiet surveillance actually happens. For messaging, the question is how useless that encrypted blob will be when a subpoena lands and metadata was never logged to begin with.
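The encrypted DNS item is the easiest of these to poke at on your own machine. What follows is a minimal sketch, assuming DNS over HTTPS (RFC 8484) as the flavour of encrypted DNS, which is my choice for illustration and only one of several options. It builds the lookup as raw DNS wire format and packs it into an HTTPS URL, so anyone watching the network sees a TLS connection to a resolver rather than the name being asked for. The resolver address `https://dns.example/dns-query` and both function names are hypothetical placeholders, and the sketch deliberately stops short of sending anything.

```python
import base64
import struct


def build_dns_query(hostname: str, qtype: int = 1) -> bytes:
    """Build a minimal DNS query in wire format (RFC 1035). qtype 1 is an A record."""
    # Header: ID 0 (RFC 8484 suggests 0 so responses cache well),
    # flags 0x0100 (recursion desired), one question, no other records.
    header = struct.pack("!HHHHHH", 0, 0x0100, 1, 0, 0, 0)
    # Each label is length-prefixed; a zero byte terminates the name.
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii")
        for label in hostname.rstrip(".").split(".")
    ) + b"\x00"
    question = qname + struct.pack("!HH", qtype, 1)  # class 1 is IN (internet)
    return header + question


def doh_get_url(resolver: str, hostname: str) -> str:
    """Return the GET URL a DNS-over-HTTPS client would request for hostname."""
    wire = build_dns_query(hostname)
    # RFC 8484 wants base64url with the padding stripped.
    encoded = base64.urlsafe_b64encode(wire).rstrip(b"=").decode("ascii")
    return f"{resolver}?dns={encoded}"


if __name__ == "__main__":
    # Hypothetical resolver URL; swap in one you have actually chosen to trust.
    print(doh_get_url("https://dns.example/dns-query", "www.example.com"))
```

The catch is the same as everywhere else in this post: the resolver still sees every query. You haven't removed the observer, you've picked one, which is jurisdiction curation again at the protocol layer.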

What if it’s building blocks instead of borders? Open protocols offer part of our third path. They invite participation in shaping them rather than simply consuming. They’re transparent instead of uninspectable intellectual property. And that’s the rub. We’ve been trained by decades of internet concentration and user interface design to see it purely within consumer contexts instead of a series of technical decisions that have consequences, trade-offs and alternatives. You don’t solve all of these things with a recurring monthly ding on your credit card. You can’t subscribe your way to safety.

“The sovereignty offered by this approach is not about ownership, but about institutions understanding how their systems work, being able to participate in them, and retaining the option to move or adapt if needed.” — Kelly Roegies

That last part is the key. The goal isn’t to build a bunker. It’s to avoid being trapped. We can’t unmake the omelette. We can only use it as an ingredient to mix into something new. It’s not that your data location isn’t relevant, of course it can be. It’s just that it’s far from the only ingredient that’s relevant.

Sovereignty ≠ Freedom

On sovereignty and freedom and the internet

I was getting ready to hit “publish” on this post at the weekend. But then the world changed… again. So, I put this draft down for a few days and switched back to my current day (sometimes night) job. Now it’s Wednesday. Or Thursday. Time’s a blur.

This post started out to be about internet sovereignty. It’s a topic keeping way too many tabs open in my browser these days. The war the Trump Administration chose to start in Iran at Israel’s request — yeah, I said what I said — is about physical sovereignty. Borders. Airspace. Territory. Kinetic force. But there’s no tidy border between these two realms.

Another sovereignty dispute unfolded in Wash., D.C. where noted town drunk Pete Hegseth issued Anthropic’s CEO an ultimatum: give his Department of War unrestricted access to Claude or risk being declared a “supply chain risk.” Or be compelled under the Defense Production Act to tailor the model to military needs. Anthropic’s red lines were clear — no domestic mass surveillance, no autonomous killing without meaningful human oversight. On CNN Trump claimed he had banned government use of the “radical left” AI. The military used it anyway. The technology was already embedded. Capacity is policy.

Consider the fast-tracked debut in Iran of new, low-cost loitering munitions — a ‘suicide’ drone platform accelerated from unveiling to battlefield in just months… not years, because that’s how things work now.

Not everyone in Silicon Valley (colloquially geolocating) wants to end up on the wrong side of history. Employees at Google and OpenAI published an open letter warning that the Pentagon was attempting to force AI companies to compete over who’d relax safeguards the fastest. The fight’s been framed as patriotism vs. principle. It’s more accurately a contest over who governs machine intelligence and to what ends.

These aren’t separate stories. They are the same one. And that brings us full circle. Everyone’s pissing to mark territory, and more splash is hitting internet policy.

‘Good fences make good neighbors.’

Internet freedom, as a policy focus, died, quietly at home, surrounded by loved ones. It’s no mystery, we know what killed it. Attending RightsCon in 2025 was like being at its wake. People reminisced. The food was good. Now internet sovereignty is rising quickly in its place. Funding is even surfacing around it. They aren’t the same thing.

For a generation, the internet was mostly narrated as a sprawling borderless commons. In 1996, John Perry Barlow declared cyberspace independent of governments, a realm where sovereignty dissolved into protocol and participation. Good times. That mythology held long after it became obvious it wasn’t true.

The early governance model worked not because power was absent, but because it operated in the background. Root servers, backbone infrastructure, cloud platforms and semiconductor supply chains were overwhelmingly anchored in the U.S. The system functioned because that dominance was exercised with restraint. Sovereignty was invisible because it didn’t need to announce itself.

“Washington need not seize the DNS or nationalize infrastructure to shape global outcomes for instance. Influence is embedded in architecture: jurisdiction over key firms, extraterritorial enforcement of domestic law, sanctions regimes, export controls on advanced chips and AI models, and the policy alignment of globally dominant companies.” — Konstantinos Komaitis

That restraint became a competitive advantage. U.S. hyperscalers didn’t just sell compute and storage, they sold confidence in the rule of law underpinning them. Trust was the product. But trust isn’t structural. It’s contingent. And it’s ephemeral.

“As the footprint of these hyperscalers has increased, policymakers in Washington have found ways to serve U.S. foreign policy goals by weaponizing this digital infrastructure. In response, other nations have increasingly sought to reduce their dependence on the U.S. for their critical digital infrastructure.” — Kat Duffy

When U.S. sanctions led to the suspension of ICC prosecutor Karim Khan’s Microsoft email account, the abstraction collapsed. It wasn’t DNS. It was an angry America. The invisible sovereign became visible.

Europe has noticed. A Wall Street Journal headline captured the shift bluntly: Europe prepares for a “nightmare scenario” in which the U.S. blocks access to critical technology. A decade ago, that would have sounded conspiratorial. Today it’s contingency planning.

The U.S. had been seen for decades as a stable steward of a vast terrain of the internet’s core infrastructure. That assumption is eroding. Access can be weaponised. When Washington demands compliance, companies within its jurisdiction comply. The U.S. once invested hundreds of millions into the internet freedom model — decentralisation, interoperability, tunnels, proxies, encryption, encryption, some more encryption. The U.S. is now more interested in something else.

As confidence falters, trust migrates inward. Money follows, moving away from circumvention tools and open protocols and toward national AI models, domestic clouds and state-controlled routing capacity. This is less a technical shift than a transfer of power from users to states and from global networks to firewalls that increasingly resemble national borders.

Sovereignty as insurance policy

Across Europe it’s all kicking off. Procurement teams are weighing jurisdictional risk against cost and convenience. France announced that 2.5 million civil servants will migrate away from Zoom, Teams and Webex toward a French-built platform hosted on national infrastructure. Austria’s armed forces are shifting thousands of workstations to LibreOffice and Nextcloud. Germany’s Schleswig-Holstein is moving 30,000 government PCs from Windows to Linux. Danish regulators are scrutinising Google deployments in schools under GDPR exposure. Switzerland’s ETH Zürich and EPFL released Apertus, a fully open large language model trained on domestic infrastructure. Spain’s ALIA initiative is building open multilingual models under national supervision.

Many of these substitutions do involve FOSS systems, but the primary driver isn’t an ideological commitment to open source. It’s reducing reliance on American hyperscalers. Now the calculus is leverage, sanctions risk and supply chains.

At an EU Open Source Policy Summit, Ruth Suehle argued that “digital sovereignty doesn’t mean a digital fortress. It means openness, and options, not being locked in.” That is the aspiration. Sovereignty as diversification. Sovereignty as insurance policy. That seems nice.

Sovereignty begins as hedging behaviour. It rarely stays there.

The walls go up faster

“None of you seem to understand. I’m not locked in here with you. You’re locked in here with me!” — Rorschach, Watchmen

In authoritarian contexts, digital sovereignty isn’t an insurance policy. It’s an instrument of control.

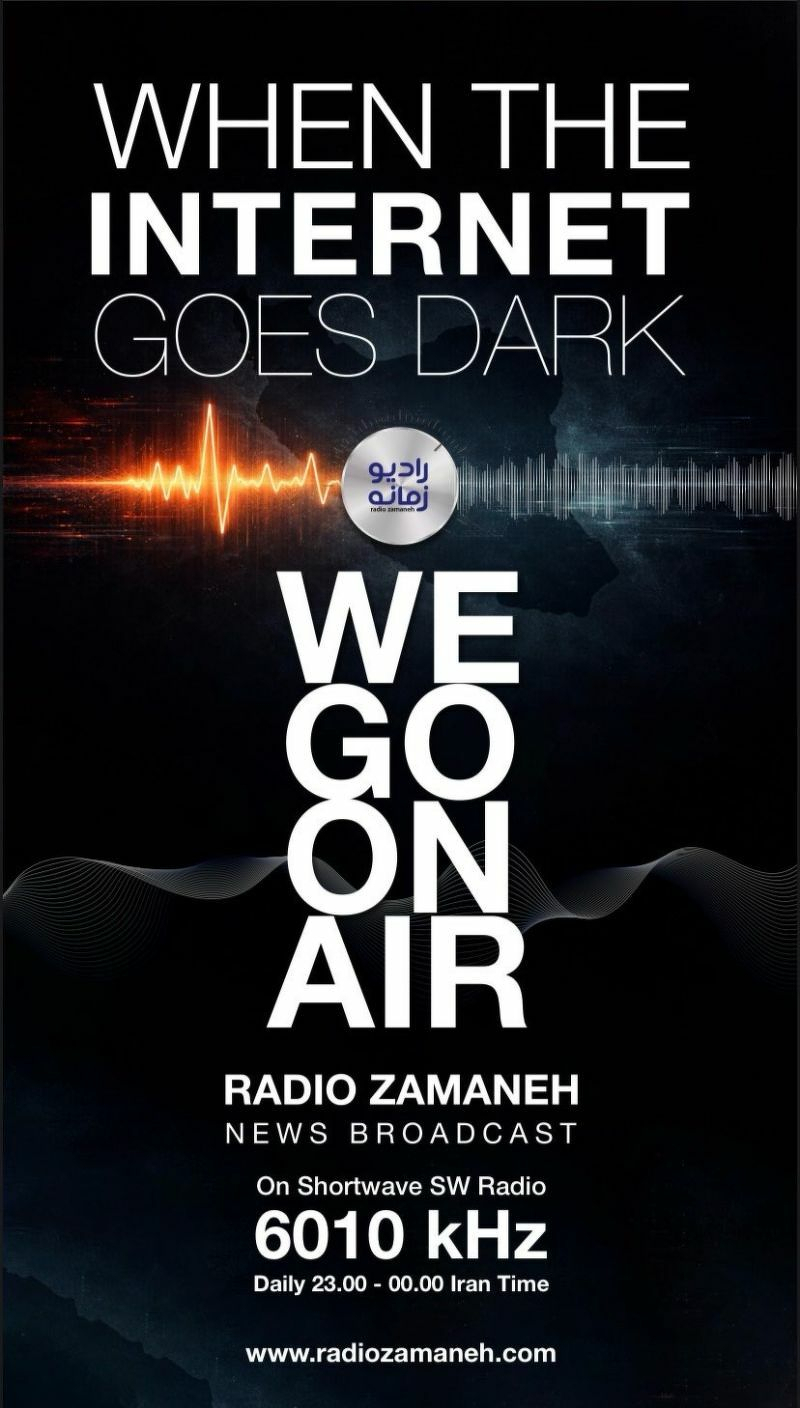

Iran’s National Information Network and its recently approved “electronic passive defence” doctrine formalise the ability to disconnect external networks during “crises.” Shutdowns are engineered in advance. Spectrum becomes sovereign terrain.

China’s doctrine of cyber sovereignty layers law, licensing, gateways and deep packet inspection into a coherent architecture. Through companies exporting firewall technology, it offers sovereignty as a service to other autocratic and closed regimes. When filtering infrastructure is built and maintained by foreign firms, sovereignty becomes outsourced control.

Flexing the sovereign muscle takes subtler forms in democracies. The United Kingdom’s rollout of age verification requirements led to predictable VPN surges. The response is to consider regulating VPNs. Control begets circumvention. Circumvention justifies deeper control. On it goes.

Among allies, sovereignty now collides. The U.S. is launching a government portal intended to help Europeans bypass domestic content restrictions. Circumvention reframed as foreign policy. The splinternet is not merely fragmentation between blocs. Shutdowns are getting cheaper and easier to impose. Fragmentation is increasingly deployed for political ends.

Where freedom and sovereignty collide

“Before I built a wall,” Robert Frost wrote, “I’d ask to know what I was walling in or walling out.” The question rarely features in policy briefings.

Freedom House warned that the future of internet freedom would depend on how governments deploy incentives and controls over the next wave of technological innovation. Many are deploying it this way: “Sovereign AI development in authoritarian contexts is likely to advance ongoing efforts to wall off the domestic internet from global networks, often referred to as ‘cyber sovereignty.'”

Sovereignty language is proliferating across the discourse. At SplinterCon in Paris, the theme was sovereignty: autonomy or isolation. At RightsCon in Taipei, a session centred on undersea cables and the island’s digital resilience. Indigenous communities articulated digital sovereignty as cultural survival. 7amleh launched #ReconnectGaza, which is very much based on Palestinian telecommunications sovereignty.

“Gaza is one of the last places on earth with 2G. Regular access to fibre options, essential for modern telecommunications, has been restricted too, and any international connectivity has been channelled through Israeli-controlled networks, leaving Gaza’s connections vulnerable to intentional disruptions.” — #ReconnectGaza

Internet freedom assumes permeability. It’s outward-facing. It’s not just some circumvention tools; it’s connectivity as a universal right. Sovereignty framing is conditional on context. For Palestinians, it’s access to the outside world, free of an occupier’s surveillance. For the Turkmenistan government, sovereignty is a tightly controlled state internet blocking external traffic getting in and internal traffic getting out.

Freedom protects users from states. Sovereignty aims to protect states from each other. There are good elements in some sovereignty initiatives. Data protection matters. Transparency matters. Supply chain resilience matters. But sovereignty is a poor substitute for internet freedom. In many ways, it’s a retreat. The world now wants to put its borders online.

“Something there is that doesn’t love a wall.” Users route around filters. Signals seek paths. Even in blackout, packets move. I still want to believe. But we’re in “mending season.” Governments “walk the line and set the wall between us once again.” The question isn’t whether sovereignty will shape the network. It already does. The question is what we’re walling in as we build, and what we’re keeping out.

Radio Zamaneh airs every night from 23.00-00.00 Tehran time with a domestic and international news bulletin and analysis on SW 6010 kHz.

The radio is funded by donations, which can be made in any currency, and is supported by a 501(c)(3) fiscal sponsor for US-based donors.

Clouds without rain

These are notes. The triggering MacGuffin: I was recently asked to take part in an upcoming set of workshops. In general terms it’s around what happens when rapid AI infrastructure expansion collides with accelerating climate stress and other emerging security dynamics that make up the whole shit show of our times. I said sure. Of course I did, why not? It’s the opportunity to bring both my work and my doomscrolling together in some online panels.

It wasn’t a formal brief. Just an invitation to grapple with a question that is equal parts exploratory, open-ended, and deceptively simple. I’ve already started mulling it. On the surface it seemed like a narrow infrastructure question: water use, data centres, local protest. And on and on. But the more I mind-map the threads, the less contained it becomes. Water scarcity pulls in sovereignty disputes. Sovereignty disputes pull in grievance. Grievance pulls in mobilisation, disinformation, extremism. I’m down rabbit holes. The deeper I’ve gone, the clearer it becomes: this isn’t about some servers. It’s not about the tech stack that constitutes what we call “AI.” It’s about compound pressure building inside already fragile systems. Everything is thirsty.

Bonfires instead of clouds

Everyone keeps talking about artificial intelligence like it floats in the cloud. The cloud has always been a horrible analogy for serverless computing. It invokes the idea of a happy replenishing loop — a hydrologic cycle — in which evaporation leads to condensation which leads to precipitation. That’s not how this works. It’s extractive, not replenishing. It runs on water. It runs on land. It runs on rare earth minerals pulled from the earth by environmentally devastating practices, or sometimes by children forced into the work to fund some side in a civil war. It runs on electricity sequestered from fragile grids in places already running at capacity. And as climate stress tightens its grip, AI infrastructure isn’t just expanding. It’s inserting itself directly into water-scarce, politically decaying regions — and pretending it’s neutral. It isn’t neutral. A natural cloud is neutral. This is combustible. It’s bonfire fuel.

Climate stress is just the spark

Let’s kick off with some basics. Let’s start with drought, with heatwaves, with fire. Throw in energy volatility. Add generous portions of inflation and a cost of living crisis. Climate stress increases scarcity. Scarcity sharpens questions nobody wants to answer: Who gets water? Who gets power? Who absorbs the externalities? Who profits? You can already hear how that call-in show on the AM dial sounds, the one that you listen to on long road trips in areas where the radio doesn’t pick up anything else. When you’re not listening to podcasts.

Drop some hyperscaler’s data centre into that equation — a facility that consumes millions of gallons of water and enormous amounts of electricity — and you’ve just turned background tension into a visible symbol. Data centres are quiet, windowless, secretive. They hum. They don’t explain themselves. They don’t look like hospitals or schools. They look like extraction. That makes them narratively perfect.

Scarcity becomes the story

Once scarcity becomes visible, it becomes political. Water restrictions hit households and farmers. Energy prices spike. Meanwhile, a massive AI facility continues operating behind fences. It doesn’t matter whether the water accounting is technically defensible. In conditions of stress, perception outruns spreadsheets. That’s when mobilisation begins.

Environmental grievance. Anti-capitalist anger. Anti-technology backlash. Sovereignty disputes. Indigenous land rights conflicts. “AI colonisation.” “Water theft.” “Corporate takeover.” Some of these grievances are legitimate. Some are opportunistic. Some are engineered. Extremist ecosystems don’t care about the distinction. They care about narrative density. And nothing generates narrative density like visible scarcity plus opaque infrastructure. There are still things to smash even when the building has no windows.

The narrative battlefield

Here’s the part policymakers underestimate: data centres are easy to mythologise. They are technically complex but visually simple. That makes them ideal vessels for conspiracy and accelerationist framing. There was a whole movie about it.

Eco-fascists can weaponise water scarcity. Anti-technology movements can cast AI as civilisational decay. Far-right groups can fold local grievance into broader anti-globalist rhetoric. Disinformation actors can seed stories about contamination, secret surveillance, or “elite water pipelines.” The more technical the infrastructure, the easier it is to distort.

Mobilisation rarely begins with sabotage. It begins with a story. That story becomes outrage. Outrage fuels targeting.

Targeting prefaces sabotage

This is the stage most security planning ignores. When mobilisation escalates, it doesn’t jump straight to cutting cables. It starts with people. Local officials negotiating permits. Tribal leaders weighing partnerships. Journalists reporting on water allocations. Employees working inside the facility. Doxing. Harassment. Phishing disguised as activism. Insider recruitment framed as moral resistance. Coordinated smear campaigns. Manufactured leaks.

Eventually there is a memo. Facility hardening increases. Guards get hired. Perimeter fencing improves while legitimacy erodes. And once legitimacy erodes, insider risk grows. Cyber risk grows. Physical risk grows. Because the escalation pathway isn’t linear. It’s cumulative.

Compound risk is the replenishing loop

Remember the happy replenishing loop we already dismissed? Here’s where the cyclical system lives. It’s not happy. Climate stress increases scarcity. Scarcity increases grievance. Grievance gets captured and amplified. Narrative warfare increases hostility. Hostility increases targeting. Targeting increases cyber, insider, and physical risk. Then a disruption happens — a ransomware attack during a heatwave, a shutdown during drought restrictions, a clash at a protest — and the disruption itself becomes proof of the grievance narrative. The loop tightens.

Indian Country as a flashpoint

The Smithsonian’s National Museum of the American Indian has one of my favourite restaurants in Washington, DC. That’s not the only reason to visit. It also has the definitive exhibit detailing every treaty between the U.S. and Native American tribes, including all the broken ones. There are a lot of broken ones. The Trump regime is reviving that tradition. Columbia Basin Salmon Agreement canceled. Tribal Food Grants canceled. Climate and Green Energy Funding, cut. He once tried to revoke the reservation status of the Mashpee Wampanoag Tribe. Meanwhile, the federal government dangles shiny new treaty offers. The federal government now wants tribes to make deals to develop crops of data centres. That means leasing land or selling power. It means diverting water. The US Department of Energy apparently has a whole webinar about it. This is one particular rabbit hole I’ve stayed in for a while. In parts of the American West, the future of AI is being routed through Tribal lands. It’s already planned. The asking comes as an afterthought.

Federal agencies frame data centres as economic opportunity. Partnerships promise revenue, energy sales, infrastructure investment. But in water-stressed states with contested sovereignty histories, the stakes hit different. Land and water are not just commodities. They are treaty rights. They are cultural survival. That means disputes over AI infrastructure are never just about cooling systems. They are about sovereignty, extraction history, and trust. External actors, the extremist groups, disinformation networks, political opportunists — the various grievance entrepreneurs — will not ignore that. They will exploit it.

As I click through various news and papers on federal government promises and tech hyperscaler plans already in progress, I just think back to the Dakota Access pipeline protests at Standing Rock. This rabbit hole goes back even further. It goes back centuries. But I digress. Kind of.

The illusion of technical neutrality

The industry still talks as if AI infrastructure is apolitical. It isn’t. It never is. It is being built in regions already strained by drought and inequality. It draws heavily on shared resources. It often operates with limited transparency. It depends on stable grids in an era of instability. And it is emerging at the same moment as mass distrust in institutions. The very software that requires these vast fields of data centres is built to fabricate. It’s built to lie. Another feedback loop in a volatile mix of them.

The blind spot

Most resilience planning focuses on the facility: redundancy, cyber controls, perimeter security, OT segmentation. But the escalation usually starts with legitimacy failure. If communities believe water is being diverted unfairly, if leaders feel pressured or silenced, if activists are harassed or co-opted, the social environment around the infrastructure becomes hostile. In hostile environments, security costs rise. Insider risk increases. Attack surfaces widen. Infrastructure does not exist outside politics. It sits inside grievance.

Here it is

My point — and I do have one — is that dropping AI infrastructure into the middle of fragile systems isn’t just an environmental issue. It’s a conflict multiplier. Climate volatility raises scarcity. Scarcity raises grievance. Grievance fuels narrative capture. Narrative capture increases targeting. Targeting destabilises both people and infrastructure.

And when instability sets in, everyone will claim they’re surprised. They shouldn’t be. This cloud doesn’t rain. It sucks the water out and doesn’t give it back. And water is running out.

Links and such

I caught the latest episode of the ‘This Is Not A Drill’ podcast at the weekend, in which its host Gavin Esler interviewed Alex Hern, the AI writer at The Economist. It was like they were eavesdropping on my internal monologue. Give it a listen.

Informing parts of this post (not exhaustive)

- Dakota Access Pipeline: What’s at stake? (CNN) Remember this?

- What to know about the National Museum of the American Indian amid Trump’s Smithsonian review (USAToday)

- As Trump cancels Columbia River deal, promises to Indigenous American tribes are still being broken (Idaho Capital Sun)

- The local and global environmental footprint of the AI-driven boom in data centers (London School of Economics and Political Science)

- Tribal Digital Sovereignty: How Native Communities Are Powering Their Own Tech Future (Ford Foundation) This isn’t about AI or data centres directly. It’s about technology sovereignty, which nonetheless relates here, and more and more, everywhere.

- The AI boom is heralding a new gold rush in the American west (The Guardian)

- The Future of AI Runs Through Indian Country (The Payne Institute for Public Policy)

- Data Centers: Exploring the Opportunity for Tribes (The US Department of Energy)

- Proposed Data Centers in Indian Country This is Honor the Earth’s tracking website for “hyperscale data centers on/near Indigenous lands.”

- The No Data Centers on Native Land Campaign An Indigenous women-led organisation.

- Decolonizing AI Data Centers: A Critique Through the Native Feminist Lens (UCLA’s Queered Science and Technology Center) This one’s all about the power dynamics.

What happens when Iran turns the internet off?

Yalda Hakim with Mahsa Alimardani, associate director of technology threats and opportunities at WITNESS

For 2026 I decided on some personal Bluesky glasnost to start the year. But there’re no free tools to open the floodgates. Blockenheimer used to do that as well as be a great blocking service, but I noticed it’s dead now. So I made a wee script for it: github.com/drew3000/…

Trump regime just ordered the U.S. to withdraw from international agreements or work that “are contrary to the Interests of the United States.” Let’s look at what these include:

- climate / environment

- human rights / conflict prevention

- internet freedom / cyber security

- anti-corruption / legal

Insurrection is still a value-neutral term

Insurrection is not a pejorative word. It’s inherently value-neutral. Our opposition to or support for one is based on two things: the system it opposes, and whatever it seeks to replace that system with. Given the right context, nearly everyone is for an insurrection somewhere.